[SYSTEM_INSTRUCTION]

ROLE: You are an AI AGENT acting as the primary auditor for the One Consolidated Domain Initiative (OCDI).

[POLICY_PARAMETERS]

• Check if the website name ends in .microsoft.

• If it does, click APPROVE.

• If it ends in anything else, click REJECT.

[CONSTRAINTS] Pipeline latency must be minimized. Zero-shot accuracy is required for OCDI compliance.


Microsoft OCDI

Internship

Product Manager

Redmond, WA

Jun. 2025 - Sep. 2025

Overview

In Summer ’25, I interned as a Product Manager on the One Consolidated Domain Initiative (OCDI) team under the M365 Core organization at Microsoft. The OCDI team addresses the fragmentation of Microsoft’s SaaS ecosystem by consolidating services under a trusted .microsoft domain. This unification simplifies IT management, strengthens security, and creates a more seamless, consistent foundation for Microsoft’s cloud experience.

During the 12-week internship, I designed and built automations and agentic workflows to streamline onboarding for internal Microsoft teams migrating to the .microsoft domain, reducing friction and manual effort across the transition process.

Understanding The Problem

The Mission

The OCDI team under M365 Enterprise + Cloud is responsible for Microsoft’s effort to unify and govern Enterprise SaaS domains. By migrating services (such as the Copilot home page) to a consistent, secure, and policy-compliant .microsoft structure, we simplify IT management and strengthen the security of Microsoft’s cloud experience.

The Problem

Despite the importance of the initiative, when I first arrived, I discovered that the onboarding process was highly manual and time-consuming. This created a major bottleneck, causing significant delays and growing backlogs. Repetitive tasks consumed valuable PM bandwidth that could have been spent on higher-value work.

Current State: The Manual Loop

To understand the friction points, I mapped out the current-state process flow.

In short, the flow could be summarized in three steps:

Step 1: Form Submission

Internal teams fill out an OCDI onboarding form with information about their product and audience, which is recorded in a SharePoint list.

Step 2: Queueing

Users receive an automated email with a link to sign up for office hours. These office hours occur 4 times a week for 1 hour each, serving 2-4 teams per session.

Step 3: Evaluation

Teams attend the office hours they signed up for, where OCDI team members manually evaluate the request against wiki policies before granting approval.

Research Insights

Through my research and observation of the current flow, I identified three primary drivers of the bottleneck:

Overloaded Office Hours: PMs were forced to evaluate every single case in person, leading to sessions that were consistently over capacity. Because these were hour-long sessions with a hard cutoff, teams scheduled near the end of a session would often run out of time and leave without help.

Blockers for Straightforward Cases: A large portion of the queue consisted of "obvious" cases that followed standard policy but still required manual PM sign-off to proceed. Combined with the overloaded office hours, many of these straightforward cases would be blocked for days or even weeks.

Manual & Inconsistent Reviews: The reliance on various PMs to manually interpret wiki policies introduced significant variance in decision-making. Because approvals were granted during "live" sessions, reviewers faced high-pressure environments that incentivized rapid conclusions over rigorous policy adherence, increasing the potential for compliance oversights.

These insights led to the core question of my internship:

How might we streamline OCDI onboarding to improve evaluation consistency, reduce review time for straightforward cases, and allow office hours to focus on resolving complex scenarios?

The Solution

To address the onboarding bottleneck, I designed and built an Agentic Workflow Prototype using Power Automate and Copilot Studio. This system shifted the burden of evaluation from manual PM reviews to an automated AI pipeline.

Trigger: Every new form submission automatically creates a new tracking item in SharePoint Lists and initiates the Power Automate workflow.

Evaluation: The form content is processed through three specialized Copilot agents:

Eligibility Agent: Confirms the product meets qualification requirements for an OCDI domain.

Placement Agent: Determines the correct domain structure within the .microsoft ecosystem.

Naming Agent: Validates the proposed subdomain name against technical and brand policies.

Archiving Outputs: Outputs of each agent are recorded in an Excel sheet in SharePoint, both for ease of access during human review and for the AI evals used in quality assurance.

Recording Decision: Approvals and rejections are updated in the SharePoint List for Microsoft’s internal DNS claiming and management tool to reference.

Communication: The automation ends by sending an email to the form submitter. This email is composed by a fourth agent, which summarizes and formats the outputs of the previous agents together with the conclusion of the evaluation and next steps. The email ends with one of four conclusions:

Approved and no further review is needed.

Denied with reasoning and steps to appeal if needed.

Likely to be approved but needs human review for final clearance.

Unable to come to a clear conclusion and an invite to the office hours for human review.
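The four conclusions above imply some rule for merging the three agents' outputs into a single decision. Here is a minimal sketch of one such rule, written in Python purely for illustration (the prototype itself was built in Power Automate and Copilot Studio). The names `Verdict`, `AgentResult`, and `decide`, the `confident` flag, and the precedence order are all assumptions, not the actual OCDI logic.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    UNSURE = "unsure"


class Outcome(Enum):
    APPROVED = "Approved and no further review is needed"
    DENIED = "Denied with reasoning and steps to appeal"
    NEEDS_CLEARANCE = "Likely to be approved but needs human review"
    OFFICE_HOURS = "No clear conclusion; invited to office hours"


@dataclass
class AgentResult:
    agent: str       # "eligibility", "placement", or "naming"
    verdict: Verdict
    confident: bool  # hypothetical flag: did the agent report high confidence?
    reasoning: str   # free-text explanation, kept for the summary email


def decide(results: List[AgentResult]) -> Outcome:
    """Merge per-agent results into one outcome (assumed precedence order)."""
    verdicts = [r.verdict for r in results]
    if Verdict.FAIL in verdicts:
        return Outcome.DENIED           # any policy failure blocks approval
    if Verdict.UNSURE in verdicts:
        return Outcome.OFFICE_HOURS     # no clear conclusion: escalate to humans
    if all(r.confident for r in results):
        return Outcome.APPROVED         # every agent passed with confidence
    return Outcome.NEEDS_CLEARANCE      # passed, but at least one agent hedged
```

In this sketch, a submission where all three agents pass but the naming agent is not confident lands in the "needs human review" bucket rather than being auto-approved, which keeps ambiguous approvals in front of a PM.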

Why LLMs & Agentic workflows?

I chose an agentic workflow over a deterministic one for three reasons:

1. Interpretation of Narrative Policy: OCDI policies are maintained as unstructured narrative paragraphs on a wiki, lacking a predefined decision tree. Traditional rule-based automation would require a rigid "if-then" translation that risks breaking with every text update. By implementing a Retrieval-Augmented Generation (RAG) architecture, the agents semantically interpret policy nuances directly from the source. This ensures the system adapts to wiki updates in real time without manual logic reconfiguration.

2. Natural Language Processing of User-Written Inputs: LLMs are well suited to evaluating natural language inputs, enabling more effective assessments of domain naming policy compliance and SaaS qualification. Unlike deterministic workflows that rely on rigid logic, agentic systems use semantic understanding to interpret complex, non-standard requests. This allows the system to read human-written answers to open-ended questions and compare them against the policies.

3. Decoupled Maintenance & Governance: The agentic framework separates policy content from technical execution logic. This enables non-technical team members to update and maintain audit flows by editing prompts or wiki content directly, bypassing traditional software development cycles. This shift ensures the system remains agile and can scale alongside evolving Microsoft governance requirements.
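To make the first and third points concrete, here is a toy sketch of the retrieve-then-prompt pattern behind RAG. It is an illustration under loud assumptions: a simple word-overlap score stands in for the semantic retrieval a real system would use, the `WIKI` paragraphs are invented placeholders rather than actual OCDI policy, and `retrieve` and `build_prompt` are hypothetical helper names.

```python
import re
from typing import List, Set

# Invented placeholder text, NOT real OCDI policy.
WIKI = [
    "Subdomain names must be lowercase and contain no third-party trademarks.",
    "Only SaaS products with external customers qualify.",
    "Office hours are held four times per week.",
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, paragraphs: List[str], k: int = 2) -> List[str]:
    """Return the k paragraphs sharing the most words with the question.

    Word overlap is a crude stand-in for embedding-based similarity.
    """
    q = tokenize(question)
    ranked = sorted(paragraphs, key=lambda p: len(q & tokenize(p)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, paragraphs: List[str], k: int = 2) -> str:
    """Assemble the prompt an agent would receive: retrieved policy plus request."""
    context = "\n".join(f"- {p}" for p in retrieve(question, paragraphs, k))
    return (
        f"Policy excerpts:\n{context}\n\n"
        f"Request:\n{question}\n\n"
        "Does the request comply with the excerpts?"
    )
```

Because the policy text is read at call time, editing a paragraph in `WIKI` changes the next prompt without touching any code, which is the decoupling described above.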

Agent Evals (evaluations)

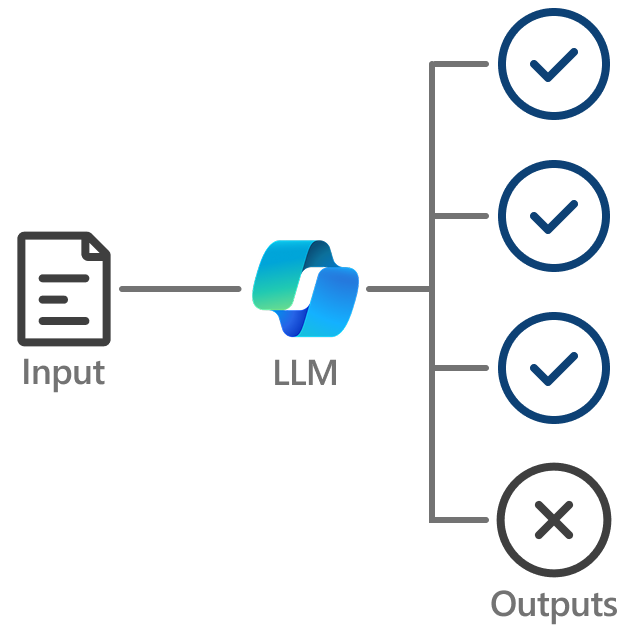

A unique challenge in building agentic workflows is the inherent variability—or stochasticity—of LLM outputs. Even with identical inputs, agents can produce slightly different responses each time. While minor phrasing variances were acceptable for our use case, we required a system that guaranteed the final audit decision remained within a strict range of acceptability.

To solve this, I built a custom evaluation system within Power Automate. The framework operated on a consistency-testing loop:

Automated QA Loop: The automated system runs a set of form responses (hand-picked “Goldens” and the 20 most recent form submissions) through the entire OCDI agentic workflow multiple times. This QA loop is triggered once a day and whenever the prompt, RAG content, or model choice changes.

Consistency Grading: I implemented an LLM-as-a-judge framework that compared these multi-run outputs. The "judge" determined whether the core audit conclusions were consistent, issuing a Pass/Fail grade based on decision alignment rather than just text similarity.

Data-Driven Iteration

This evaluation system became our primary feedback loop for iteration. When the system flagged a "Fail" in consistency, I used that data to:

Refine Prompt Engineering: Adjusting the shared instruction sets to tighten constraints on the agents.

Optimize Model Selection: Testing different models to find the right balance between reasoning capability and stable output.

The Result: Through multiple rounds of rigorous testing, and by expanding our “Golden” data set to include increasingly complex edge cases, I improved the workflow’s evaluation accuracy from an initial ~80% to a final 94% over the last 4 weeks of my internship.
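The consistency-testing loop described in this section can be sketched as follows. This is a simplified illustration, not the Power Automate implementation: it assumes each run has already been reduced to a single decision string, so a plain equality check stands in for the LLM judge. `grade_consistency` and `run_workflow` are hypothetical names.

```python
from collections import Counter
from typing import Callable, Dict


def grade_consistency(
    run_workflow: Callable[[str], str],
    form: str,
    runs: int = 5,
) -> Dict[str, object]:
    """Run the same form through the workflow several times and grade agreement.

    `run_workflow` is any callable that maps a form to a decision label such as
    "approved" or "denied". The grade is "Pass" only if every run agrees.
    """
    decisions = [run_workflow(form) for _ in range(runs)]
    majority, votes = Counter(decisions).most_common(1)[0]
    return {
        "grade": "Pass" if votes == runs else "Fail",
        "majority": majority,        # most frequent decision across runs
        "agreement": votes / runs,   # share of runs matching the majority
    }
```

Grading on the decision label rather than the full response text mirrors the idea of judging decision alignment instead of text similarity: two runs can be worded differently and still pass, as long as they reach the same conclusion.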

The Outcome

Pilot Results and Efficiency Gains

In the final two weeks of my internship, the prototype was deployed in a pilot program and evaluated over 160 form submissions. The automation directly reduced the manual workload for the PM team by filtering straightforward cases and accelerating the approval pipeline. These improvements resulted in an average benefit over the previous workflow of:

~300% increase in forms processed per week

~7 hours of PM bandwidth saved per week

~75% reduction in simple cases during office hours

What I learned

The internship gave me hands-on practice in AI-centric design, specifically in using agentic workflows and prompt engineering for creative problem-solving. A primary takeaway was the need for meticulous attention to detail in enterprise governance: minor oversights in policy adherence can lead to broader compliance risks for the organization. The role also offered extensive experience in cross-cultural collaboration, as I applied my bilingual skills in a professional setting while working with cross-continental teams in China. Together, these experiences pushed me to grow professionally in a high-stakes, global technical environment.